AI is transforming healthcare by assisting doctors, improving patient care, and optimizing clinical workflows. With 80% of hospitals using AI and annual investments exceeding $1 billion, the technology is reducing clinician workloads and enhancing decision-making. However, its rapid adoption raises ethical questions, such as who is accountable for errors and how to prevent bias in medical data.

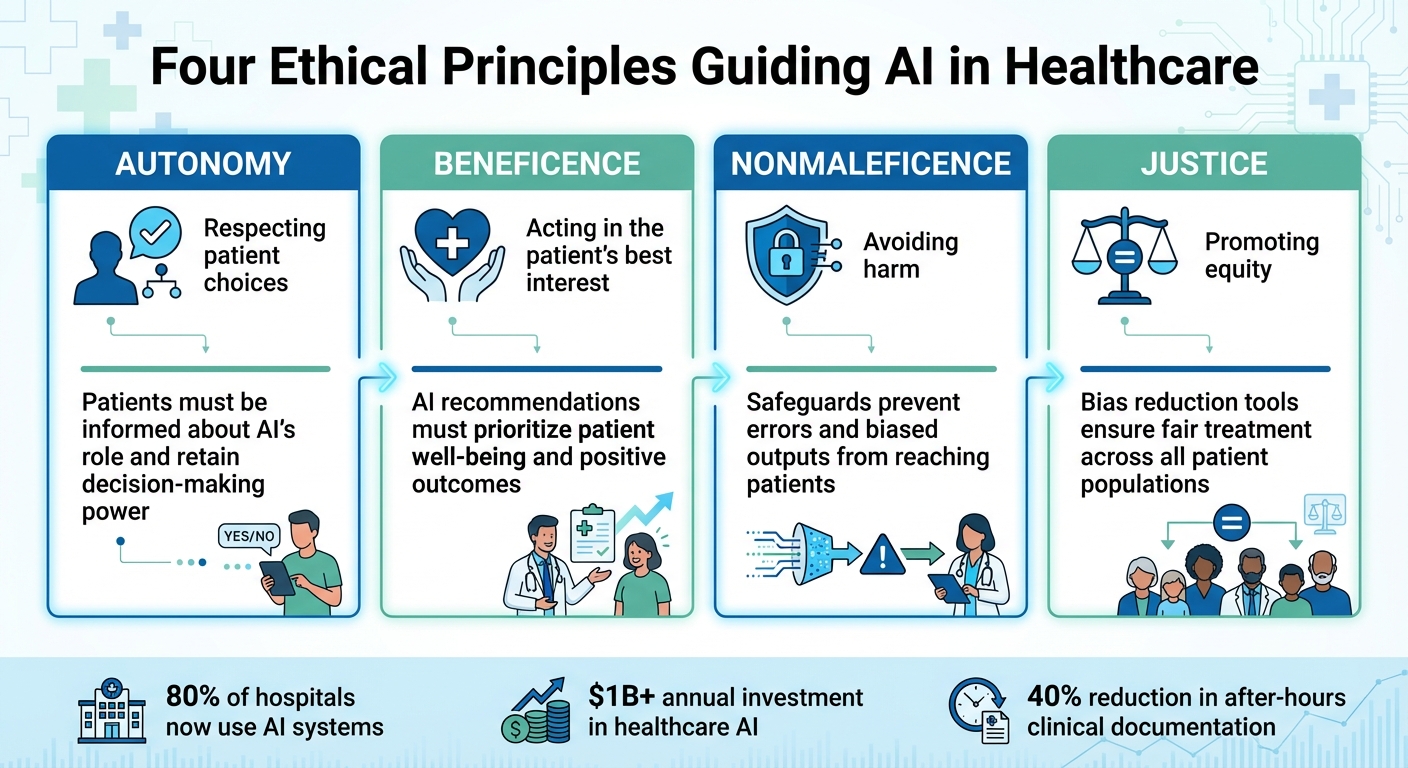

To ensure responsible use, AI in healthcare should adhere to four ethical principles: autonomy (respecting patient choices), beneficence (acting in the patient’s best interest), nonmaleficence (avoiding harm), and justice (promoting equity). Tools like BondMCP integrate health data into context-aware systems, with the goal of enabling tailored, transparent, and secure recommendations.

Key takeaways:

- Patient Autonomy: Patients must be informed about AI’s role and retain decision-making power.

- Bias Reduction: Safeguards like Healthcare AI Datasheets and Multi-Agent Systems address inequities in datasets.

- Privacy & Security: Features like data minimization and event-based audit trails protect sensitive information.

- Explainable Decisions: Transparent AI ensures patients and providers understand recommendations.

AI systems, guided by ethical frameworks and continuous monitoring, are reshaping healthcare by improving outcomes while maintaining accountability and trust.

Four Ethical Principles Guiding AI in Healthcare



Ethical Principles That Guide AI Health Systems

AI systems in healthcare must follow clear ethical principles to build trust and ensure safe, fair, and secure care. These principles guide how AI interacts with data, patients, and healthcare providers. This section focuses on three of them in practice: patient autonomy and informed consent, fairness and bias reduction, and privacy and data security. Together, they help create systems that prioritize patient well-being and accountability.

Patient Autonomy and Informed Consent

Patients have the right to know when AI is involved in their care and should have the option to accept or decline its use. Healthcare providers must clearly explain AI's role in diagnoses or treatments, empowering patients to make informed decisions through patient-centered treatment plans [6][7]. Dr. Ameera AlHasan, a Specialist Surgeon at Jaber Al-Ahmad Al-Sabah Hospital, emphasizes this by stating:

"Patients have the right to make choices and take their fate into their own hands after being well-informed" [7].

While AI can support decision-making, licensed professionals remain responsible for approving critical actions [4][8]. For patients who are unable to make decisions, AI may help estimate their preferences by analyzing electronic health records (EHRs). This could be particularly useful because studies show that healthcare providers fail to recognize patient incapacity 42% of the time, and human surrogates misjudge treatment preferences in about one-third of cases [8]. Identity frameworks such as Model Context Protocol–Identity (MCP-I) help keep AI operating within strict, authorized boundaries [1].

Fairness and Bias Reduction

AI systems can unintentionally reinforce healthcare inequalities if they rely on biased datasets or lack safeguards for equity. Tools such as Healthcare AI Datasheets document demographic data and highlight sampling issues to address potential biases [9].
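As a rough illustration of the kind of check a datasheet can document, the Python sketch below compares each subgroup's share of a training dataset with a reference population share and flags under-represented groups. The function name `representation_report`, the `tolerance` parameter, and its 0.5 default are hypothetical choices for this example; Healthcare AI Datasheets do not prescribe this code or threshold.

```python
from collections import Counter


def representation_report(records, attribute, reference_shares, tolerance=0.5):
    """Compare each subgroup's share of a dataset with a reference population share.

    A group is flagged as under-represented when its dataset share is below
    `tolerance` times its reference share. The 0.5 default is illustrative only.
    """
    counts = Counter(record[attribute] for record in records)
    total = sum(counts.values())
    report = {}
    for group, reference_share in reference_shares.items():
        share = counts.get(group, 0) / total if total else 0.0
        report[group] = {
            "dataset_share": round(share, 3),
            "reference_share": reference_share,
            "under_represented": share < tolerance * reference_share,
        }
    return report


# Sample data: 20 female and 80 male records, compared with a 50/50 reference.
records = [{"sex": "F"}] * 20 + [{"sex": "M"}] * 80
report = representation_report(records, "sex", {"F": 0.5, "M": 0.5})
```

Here the female subgroup (20% against a 50% reference) is flagged. The flag is only as meaningful as the reference shares, so a datasheet would also need to record where those baselines came from.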

Multi-Agent Systems (MAS) offer another layer of protection by assigning specialized agents - like "Transparency Agents" and "Ethical Advisors" - to oversee fairness and enforce policies [9]. In one comparison, MAS outperformed single-agent systems in mortality prediction accuracy (59% vs. 56%) while maintaining a transparency score of 85.5% [9].

To further ensure reliability, deterministic guardrails - rule-based checks - validate AI outputs before they reach patients or providers. Keith Dutton, VP of Engineering at Artera, explains:

"Prompt instructions are band-aids. The robust solution is controlling what data the tool exposes in the first place" [2].

Additional safeguards, like maintaining a server-side "source of truth", can catch errors or biased outputs. Event-based audit trails also create time-stamped records of AI actions, ensuring accountability and compliance with regulations [1][4].
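The following Python sketch shows one way an event-based audit trail could work: each entry is time-stamped, bound to an actor identity, and linked to the previous entry by a SHA-256 hash so that later edits are detectable. The `AuditTrail` class and the hash-chaining design are assumptions made for illustration, not the mechanism used by any system cited here.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


class AuditTrail:
    """Append-only, time-stamped, identity-bound event log (illustrative sketch).

    Each event stores the SHA-256 hash of the previous event, so editing or
    deleting an earlier event breaks the chain and verify() returns False.
    """

    def __init__(self):
        self.events = []

    @staticmethod
    def _digest(event):
        # Hash every field except the stored hash itself, in a stable key order.
        body = {key: value for key, value in event.items() if key != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, actor, action, details):
        event = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,  # the identity this action is bound to
            "action": action,
            "details": details,
            "prev_hash": self.events[-1]["hash"] if self.events else GENESIS_HASH,
        }
        event["hash"] = self._digest(event)
        self.events.append(event)
        return event

    def verify(self):
        prev_hash = GENESIS_HASH
        for event in self.events:
            if event["prev_hash"] != prev_hash or event["hash"] != self._digest(event):
                return False
            prev_hash = event["hash"]
        return True


trail = AuditTrail()
trail.record("agent:scheduler", "read", {"resource": "appointments"})
trail.record("agent:scheduler", "propose_slot", {"slot": "2024-07-10T09:00"})
```

Hash chaining makes tampering evident but does not prevent it; a deployed system would still need access controls on the log itself.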

Privacy and Data Security

Protecting patient data requires careful system design to minimize risks. Data minimization is a key strategy, allowing AI to access only the information it needs to perform its task, reducing the chance of leaks or misuse [2]. For example, unnecessary data fields can be removed entirely rather than relying on instructions to ignore them.
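Data minimization can be as simple as projecting a record onto an allowlist before it ever reaches the model. In this sketch, `SCHEDULING_FIELDS` and `minimize` are hypothetical names and the field list is illustrative; the point is that excluded fields are removed, not merely hidden behind an instruction to ignore them.

```python
# Hypothetical allowlist: the only fields a scheduling assistant needs.
SCHEDULING_FIELDS = frozenset({"patient_id", "preferred_language", "upcoming_appointments"})


def minimize(record, allowed_fields):
    """Return a copy of `record` containing only the allowed fields.

    Fields outside the allowlist are dropped before the model sees the data,
    instead of being passed along with an instruction to ignore them.
    """
    return {key: value for key, value in record.items() if key in allowed_fields}


full_record = {
    "patient_id": "p-1",
    "ssn": "000-00-0000",
    "diagnoses": ["E11.9"],
    "preferred_language": "es",
    "upcoming_appointments": ["2024-07-10T09:00"],
}
model_view = minimize(full_record, SCHEDULING_FIELDS)
```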

Granular access control further restricts AI to specific datasets or tasks. For instance, an AI agent might only have read-only access to lab results without permission to modify other records [1][3]. The Model Context Protocol–Identity (MCP-I) framework uses cryptographic tools to ensure every AI action is tied to an authorized individual [1].
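To make scoped access and identity binding concrete, the sketch below signs a delegation token with HMAC-SHA256 and checks both the signature and the requested scope before an agent acts. This is a generic stand-in built on Python's standard `hmac` module, not the MCP-I token format; the key, scope strings, and function names are hypothetical, and a real deployment would add managed keys and token expiry.

```python
import hashlib
import hmac
import json

SECRET_KEY = b"demo-only-secret"  # hypothetical; a real system would use a managed key


def _sign(claims):
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def issue_token(user_id, agent_id, scopes):
    """Bind an agent to the user who authorized it and to an explicit list of scopes."""
    claims = {"user": user_id, "agent": agent_id, "scopes": sorted(scopes)}
    return {"claims": claims, "sig": _sign(claims)}


def authorize(token, required_scope):
    """Reject tampered tokens first, then allow only scopes the token was issued with."""
    if not hmac.compare_digest(_sign(token["claims"]), token["sig"]):
        return False
    return required_scope in token["claims"]["scopes"]


token = issue_token("dr-lee", "lab-agent", ["lab_results:read"])
```

A read-only lab agent passes `authorize(token, "lab_results:read")` but fails any write scope, and appending a scope to the claims after issuance invalidates the signature.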

| Security Feature | Purpose | Compliance Alignment |
|---|---|---|
| Layered Encryption | Separates PHI from test data and logs | HIPAA, SOC 2 [1] |
| Event-based Audit Trails | Creates traceable records of all agent interactions | GDPR, HIPAA, HITRUST [1][3] |
| Scoped Access | Limits AI to specific tasks and datasets | Data Minimization (GDPR) [1] |
| Deterministic Guardrails | Ensures factual accuracy in dates/times | Clinical Safety Standards [2] |
| MCP-I Tokens | Cryptographically ties agents to user identity | Identity & Access Management (IAM) [1] |

Healthcare organizations face ongoing challenges with data management. Over 60% of healthcare executives cite data silos as a major obstacle to effective AI integration, and nearly 80% of healthcare data remains unstructured [1]. Standardized protocols like the Healthcare Model Context Protocol (HMCP) use HL7 and FHIR standards to improve data exchange while enforcing strict access controls [1]. Early pilot programs using secure context protocols have even reported a 40% reduction in after-hours clinical documentation [1].
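For a sense of what FHIR standardization buys, the sketch below reads a lab result from a trimmed FHIR R4 Observation resource. The JSON is sample data, `extract_lab_value` is a hypothetical helper, and the code does not implement HMCP itself; it only shows that a standardized structure (`code.coding`, `valueQuantity`) lets any system locate the same fields.

```python
import json

# A trimmed FHIR R4 Observation resource (sample data, not from a real patient).
OBSERVATION_JSON = """
{
  "resourceType": "Observation",
  "status": "final",
  "code": {"coding": [{"system": "http://loinc.org", "code": "4548-4",
                       "display": "Hemoglobin A1c/Hemoglobin.total in Blood"}]},
  "subject": {"reference": "Patient/example"},
  "valueQuantity": {"value": 6.1, "unit": "%",
                    "system": "http://unitsofmeasure.org", "code": "%"}
}
"""


def extract_lab_value(observation):
    """Pull the coded test and numeric result out of a FHIR Observation dict.

    Simplification: uses the first coding only; real resources may carry several.
    """
    if observation.get("resourceType") != "Observation":
        raise ValueError("not an Observation resource")
    coding = observation["code"]["coding"][0]
    quantity = observation["valueQuantity"]
    return {
        "loinc": coding["code"],
        "name": coding.get("display", ""),
        "value": quantity["value"],
        "unit": quantity.get("unit", ""),
    }


lab = extract_lab_value(json.loads(OBSERVATION_JSON))
```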

How AI Systems Make Ethical Health Decisions

AI systems are designed to make health-related decisions that align with ethical principles, balancing outcomes with accountability. These systems move beyond static predictions, using dynamic, context-aware approaches to better integrate data and provide transparent recommendations. This shift is reshaping how AI supports healthcare through data integration, contextual reasoning, and transparency.

Personalized Health Recommendations

AI leverages data from sources like genetics, lifestyle, environment, and clinical history to create tailored health interventions. This approach aims to improve treatment effectiveness while reducing potential side effects.

To address algorithmic bias, some AI systems combine insights from multiple models. Aggregating outputs reduces the errors of any single model and can bring results closer to expert judgment. For example, a 2025 study in the Strategic Management Journal (a business rather than clinical setting) compared how large language models (LLMs) and human experts ranked 60 business models. Individual AI evaluations showed bias, but combining assessments from different LLMs produced rankings similar to those of human experts [11].
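One simple way to combine several models' judgments is mean-rank (Borda-style) aggregation, sketched below in Python. The source does not specify which aggregation method the study used, so treat this as an illustrative technique rather than a reproduction of it; `aggregate_rankings` is a hypothetical name.

```python
from statistics import mean


def aggregate_rankings(rankings):
    """Combine several rankings of the same items by averaging each item's rank.

    `rankings` is a list of lists, each ordering the same items from best to worst.
    Ties in average rank are broken alphabetically so the result is deterministic.
    """
    items = rankings[0]
    if any(sorted(ranking) != sorted(items) for ranking in rankings):
        raise ValueError("every ranking must contain the same items")
    average_rank = {
        item: mean(ranking.index(item) + 1 for ranking in rankings) for item in items
    }
    return sorted(items, key=lambda item: (average_rank[item], item))


# Three hypothetical model outputs that disagree on the order of A, B, and C.
consensus = aggregate_rankings([["A", "B", "C"], ["B", "A", "C"], ["A", "C", "B"]])
```

Averaging dampens the quirks of any single model, but it cannot remove a bias that every model shares, so model diversity and human review still matter.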

AI's ability to personalize care has already made a measurable difference. Tools like InnerEye have streamlined radiotherapy planning by cutting manual segmentation time by 90%, while IDx-DR has shown strong performance in detecting diabetic retinopathy [10].

Context-Aware Decision-Making

Traditional AI systems often work in isolation, analyzing data without considering the broader health context of a patient. Context-aware systems address this limitation by maintaining a continuous understanding of a patient’s health journey, clinical goals, and medical history across various specialties.

Platforms like BondMCP exemplify this approach. These systems allow AI to make decisions that consider a patient’s complete health profile and long-term objectives. For instance, a sleep tracker can inform a training coach, lab results can adjust supplement protocols, and longevity goals can guide real-time decisions. This shared context ensures AI agents operate in harmony, offering seamless personalization and automation.
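The shared-context idea can be sketched as a small publish/subscribe store in which one agent's output triggers another agent's update. `SharedContext`, `training_coach`, and the six-hour sleep threshold are hypothetical and illustrative only; this is not BondMCP's SDK or API, and the rule is not clinical guidance.

```python
from collections import defaultdict


class SharedContext:
    """Minimal shared store that lets one agent's output inform another's."""

    def __init__(self):
        self.facts = {}
        self.subscribers = defaultdict(list)

    def subscribe(self, key, callback):
        self.subscribers[key].append(callback)

    def publish(self, key, value):
        # Store the new fact, then notify every agent that depends on it.
        self.facts[key] = value
        for callback in self.subscribers[key]:
            callback(value, self.facts)


def training_coach(sleep_hours, facts):
    # Hypothetical rule: scale back intensity after a short night's sleep.
    facts["training_plan"] = (
        "light recovery session" if sleep_hours < 6 else "planned interval session"
    )


context = SharedContext()
context.subscribe("sleep_hours", training_coach)
context.publish("sleep_hours", 5.2)  # e.g. reported by a sleep tracker
```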

The Model Context Protocol (MCP) serves as a universal interface, enabling AI to securely access real-time patient data from Electronic Health Records (EHRs) and lab databases. As Peter Horadan, CEO of Vouched, explains:

"MCP gives AI models a live, contextual view of patient status and clinical workflows... moving AI beyond analysis to direct operational support" [1].

Early pilot programs of MCP-integrated tools have reported promising results, such as a 40% reduction in after-hours documentation for healthcare providers [1]. For developers, BondMCP offers a structured framework and software development kit (SDK) to build context-aware, interoperable tools without recreating memory systems or toolchains for each use case. This unified context simplifies care coordination and helps keep AI decisions accountable and transparent.
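Under the hood, MCP messages are JSON-RPC 2.0, and a client invokes a server-side tool with a `tools/call` request. The sketch below builds such a request and reads the text content from a result. The tool name `get_latest_labs` and its arguments are hypothetical, and transport, session initialization, and authorization are omitted.

```python
import json
from itertools import count

_request_ids = count(1)


def make_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 'tools/call' request as used by MCP clients."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def read_tool_result(response_text):
    """Return the text blocks from a tools/call result, raising on errors."""
    response = json.loads(response_text)
    if "error" in response:  # protocol-level error
        raise RuntimeError(response["error"].get("message", "protocol error"))
    result = response["result"]
    if result.get("isError"):  # tool-level error reported inside the result
        raise RuntimeError("tool reported an error")
    return [block["text"] for block in result["content"] if block.get("type") == "text"]


request = make_tool_call("get_latest_labs", {"patient_id": "p-1"})
```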

Explainable AI Decisions

In healthcare, transparency is critical. Patients and providers must understand the reasoning behind an AI system’s recommendations before acting on them. Explainable AI (XAI) systems address this by logging every step of the decision-making process, including the data used, modules involved, and clinical thresholds applied. This creates a detailed, machine-readable audit trail that mirrors the logic of human medical reasoning [12].
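A decision trace of this kind can be sketched as a list of structured steps, each recording the module, data source, inputs, threshold, and outcome. The `DecisionTrace` class, the `review_hba1c` rule, the `Observation/123` source reference, and the 6.5% cut-off are illustrative assumptions, not a validated clinical rule or the design used in the cited work.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DecisionTrace:
    """Ordered, machine-readable record of how a recommendation was produced."""
    steps: list = field(default_factory=list)

    def log(self, module, source, inputs, threshold, outcome):
        self.steps.append({
            "module": module,        # which component made this step
            "source": source,        # where the input data came from
            "inputs": inputs,
            "threshold": threshold,  # rule parameter applied, if any
            "outcome": outcome,
        })

    def to_json(self):
        return json.dumps(asdict(self), indent=2)


def review_hba1c(value_percent, source, trace, threshold=6.5):
    """Flag an HbA1c result for clinician review and log why (illustrative rule only)."""
    flagged = value_percent >= threshold
    trace.log(
        module="hba1c_rule",
        source=source,
        inputs={"hba1c_percent": value_percent},
        threshold=threshold,
        outcome="flag_for_clinician_review" if flagged else "no_flag",
    )
    return flagged


trace = DecisionTrace()
review_hba1c(7.2, "Observation/123", trace)
```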

To enhance safety, deterministic guardrails are used to validate AI outputs before they reach patients. For instance, in February 2026, Artera introduced a validation layer for healthcare AI to ensure date and time accuracy. This system checks AI-generated dates against real calendars to catch errors. In one case, when an LLM incorrectly identified July 10th as a Thursday instead of a Wednesday, the guardrail flagged and corrected the mistake before the patient received the information [2].
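A minimal version of such a date guardrail might look like the Python sketch below: it finds phrases such as "Thursday, July 10", recomputes the weekday from the calendar, and corrects mismatches. The phrase format, the `validate_weekdays` name, and the explicit `year` argument are assumptions (the example above does not state a year); this is not Artera's implementation.

```python
import re
from datetime import date

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday"]
MONTHS = ["January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December"]

# Matches phrases like "Thursday, July 10" (an assumed, simplified format).
DATE_PHRASE = re.compile(
    r"\b(" + "|".join(WEEKDAYS) + r"),? (" + "|".join(MONTHS) + r") (\d{1,2})\b"
)


def validate_weekdays(text, year):
    """Correct weekday names that disagree with the calendar and report each fix."""
    fixes = []

    def check(match):
        weekday, month_name, day = match.group(1), match.group(2), int(match.group(3))
        try:
            actual = WEEKDAYS[date(year, MONTHS.index(month_name) + 1, day).weekday()]
        except ValueError:
            # Impossible dates such as "February 30" are reported, not guessed at.
            fixes.append(f"invalid date: {month_name} {day}")
            return match.group(0)
        if actual != weekday:
            fixes.append(f"{month_name} {day}: {weekday} -> {actual}")
            return match.group(0).replace(weekday, actual, 1)
        return match.group(0)

    return DATE_PHRASE.sub(check, text), fixes


corrected, fixes = validate_weekdays("Your visit is on Thursday, July 10 at 9 am.", 2024)
```

In 2024, July 10 fell on a Wednesday, so the sketch rewrites the sentence and reports one fix. Because the check is deterministic, it gives the same answer every time regardless of what the model generated.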

Keith Dutton, VP of Engineering at Artera, highlights the importance of designing systems that inherently prevent errors:

"Don't trust the LLM to do the right thing with extra information… design your tools so the right thing is the only option" [2].

Additionally, systems maintain a server-side "source of truth" that operates independently of the AI’s conversational context. This ensures that sensitive actions - such as canceling appointments or modifying prescriptions - are validated against actual database records, preventing AI errors from impacting external systems [2]. Combined with event-based audit trails that log time-stamped, identity-bound records of every AI action, these safeguards support compliance with regulations such as HIPAA and GDPR and alignment with interoperability standards such as FHIR [1][12].
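The server-side check described above can be sketched as follows: before cancelling, the handler looks up the appointment in the server's own records and compares its owner with the patient ID from the authenticated session rather than any ID the model supplied. The in-memory `APPOINTMENTS` table and `cancel_appointment` function are hypothetical stand-ins for a real scheduling database.

```python
# Hypothetical server-side records; in practice this would be the scheduling database.
APPOINTMENTS = {
    "apt-100": {"patient_id": "p-1", "status": "booked"},
    "apt-200": {"patient_id": "p-2", "status": "booked"},
}


def cancel_appointment(session_patient_id, appointment_id):
    """Cancel only if the server's own records confirm the request is valid.

    `session_patient_id` comes from the authenticated session, not from the
    model's conversation, so a hallucinated or injected appointment ID cannot
    cancel another patient's visit.
    """
    record = APPOINTMENTS.get(appointment_id)
    if record is None:
        return "rejected: unknown appointment"
    if record["patient_id"] != session_patient_id:
        return "rejected: appointment belongs to a different patient"
    if record["status"] != "booked":
        return f"rejected: appointment is already {record['status']}"
    record["status"] = "cancelled"
    return "cancelled"
```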

Monitoring and Improving AI Ethics Over Time

Creating ethical AI systems in healthcare isn’t a one-and-done deal - it requires constant evaluation. As new challenges arise, regulations shift, and real-world feedback rolls in, these systems need to adapt. This ongoing effort ensures that AI recommendations stay accurate, fair, and aligned with the latest healthcare standards. Regular monitoring strengthens the ethical foundation of AI in this field, keeping it reliable and relevant.

User Feedback and Algorithm Updates

Feedback from patients and healthcare providers plays a huge role in refining AI algorithms. By analyzing aggregated data and user reports, organizations can identify areas where improvements are needed. For example, in March 2026, Artera's engineering team, led by Keith Dutton and Anav Sanghvi, introduced a validation layer that scans every AI-generated response for accuracy, helping prevent errors in tasks like scheduling. The update follows the principle Dutton and Sanghvi describe: rather than relying on prompt instructions, control what data the tool exposes in the first place [2].

For high-risk tasks, human oversight provides an additional layer of safety. AI systems can be programmed to require human approval - whether from administrators or clinicians - before making critical decisions, such as onboarding a patient or adjusting treatment plans. This approach reinforces accountability within AI systems and ensures human judgment remains part of the process [1][5].
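A human-in-the-loop gate can be sketched as a queue that holds high-risk actions until a named clinician or administrator approves them, while routine actions run immediately. The action names in `HIGH_RISK_ACTIONS` and the `ActionGate` class are hypothetical; a real system would also persist the queue and record each approval in its audit trail.

```python
from itertools import count

# Hypothetical list of actions that always need a human sign-off.
HIGH_RISK_ACTIONS = {"onboard_patient", "adjust_treatment_plan"}


class ActionGate:
    """Run routine actions immediately; hold high-risk ones until a human approves."""

    def __init__(self):
        self.pending = {}   # request_id -> (action, params)
        self.executed = []  # (action, params, approver or None)
        self._ids = count(1)

    def submit(self, action, params):
        if action in HIGH_RISK_ACTIONS:
            request_id = next(self._ids)
            self.pending[request_id] = (action, params)
            return "pending_approval", request_id
        self.executed.append((action, params, None))
        return "executed", None

    def approve(self, request_id, approver):
        action, params = self.pending.pop(request_id)  # KeyError if unknown
        self.executed.append((action, params, approver))
        return "executed"

    def reject(self, request_id, approver):
        self.pending.pop(request_id)
        return f"rejected by {approver}"


gate = ActionGate()
gate.submit("send_reminder", {"patient_id": "p-1"})
status, request_id = gate.submit(
    "adjust_treatment_plan", {"patient_id": "p-1", "change": "dose review"}
)
```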

Oversight Boards and Compliance Monitoring

Strong oversight and real-time compliance checks are essential for keeping AI systems aligned with ethical standards. Ethics committees and monitoring systems ensure that platforms operate responsibly over time. Effective oversight boards bring together experts from various fields, including technology, law, risk management, social sciences, and ethics, to provide a comprehensive view of AI's impact. Despite its importance, only 29% of organizations currently have thorough AI governance plans, even though 60% of legal and compliance leaders identify technology as their top risk concern [14].

To address this gap, many organizations are adopting frameworks like the certifiable ISO/IEC 42001 standard and the voluntary NIST AI Risk Management Framework. HITRUST-certified environments using the AI Assurance Program for risk management have reported a breach-free rate of 99.41% [6]. These frameworks favor continuous monitoring over relying solely on periodic quarterly reviews. As Brian Besanceney, Board Chair at Orlando Health, points out:

"Quarterly board cycles don't match the tempo of AI" [13].

Tools like BondMCP support this real-time approach by offering event-based audit trails that are time-stamped and tied to specific identities for every AI action. This level of auditability supports compliance with regulations such as HIPAA and GDPR and alignment with standards such as FHIR, while also helping healthcare teams trace how AI systems make decisions across interconnected data sources [1]. Such oversight builds on the ethical frameworks described earlier, ensuring accountability and transparency throughout the AI lifecycle. By doing so, organizations can shift from reactive compliance to proactive ethical leadership.

Conclusion

Ethical AI in health optimization isn't just about crafting smarter algorithms - it’s about building systems that are accountable, transparent, and work seamlessly together. By prioritizing patient autonomy, reducing biases, safeguarding privacy, and ensuring ethical clinical decisions are explainable, AI can play a meaningful role in improving healthspan and longevity. However, tackling real-world challenges, like fragmented data, is essential to achieving this vision.

Currently, over 60% of healthcare executives point to data silos as a major roadblock, and nearly 80% of healthcare data is unstructured and difficult for traditional systems to use [1]. This fragmentation forces AI systems to work independently, often delivering conflicting advice and missing opportunities to provide better, more informed health insights.

Platforms such as BondMCP address these challenges by unifying scattered health data. They create an intelligence layer that aligns AI agents, supporting precise, coordinated decision-making. This approach not only synchronizes diverse data streams for consistent, real-time insights but also enforces strict accountability and identity-based safeguards. Early pilot programs using secure context protocols have already shown promising results, including a 40% reduction in after-hours documentation for healthcare providers [1]. This suggests that ethical AI can benefit operational workflows as well as patient care.

As these systems are integrated into real-world healthcare, it’s clear that ethics and functionality must go hand in hand. As Peter Horadan, CEO of Vouched, aptly put it:

"The future of healthcare AI is not just intelligent - it's accountable."

FAQs

Who is legally responsible if an AI recommendation harms a patient?

Legal responsibility for harm resulting from an AI recommendation remains a gray area. That said, ethical standards emphasize the importance of implementing safeguards, ensuring responsible usage, and maintaining oversight. In practice, accountability often involves collaboration between healthcare providers, developers, and regulators to minimize risks and promote the correct application of AI in healthcare.

How can patients opt out of AI and still get quality care?

Patients can opt out of AI-driven care by exercising their rights to informed consent and privacy. Ethical guidelines protect patient autonomy, so individuals can choose not to involve AI in their care. Regulatory organizations emphasize transparency, and traditional care led by human clinicians remains available for those who prefer it. For patients who do accept AI-assisted care, frameworks like the Model Context Protocol (MCP) are designed to support secure and transparent AI use, helping patients keep control over their data and healthcare decisions.

How do audit trails prove what an AI did and why?

Audit trails provide a clear record of what an AI system did and why it made certain decisions. They document key details like the data it processed, the algorithms it applied, and the actions it took. This transparency allows for a thorough review of the system's decisions, helping ensure accountability and adherence to ethical standards, especially in health-related contexts.